Beyond the Bot: Why Your High-Stakes Content Needs an Aviation-Grade Fact-Checking System

AI Summary

Automated fact-checking for high-stakes, data-intensive content demands more than standard AI tools that often hallucinate or miss source credibility, creating risks rather than solutions. This article explores aviation-inspired architectures that layer verification, integrate source intelligence, and require human oversight to build trust rather than just speed.

- Why standard AFC systems suffer from hallucination and lack source intelligence, making them unreliable for critical content.

- How resilient hybrid architectures use multiple independent verification layers, source weighting, and immutable audit trails.

- The essential role of human-in-the-loop design as a permanent feature to ensure accuracy and accountability.

This article is for organizations responsible for critical data integrity that want to design fact-checking systems built for trust and verifiable accuracy rather than automation alone.

You just signed off on the annual investor report. Every number, from revenue growth to market share projections, has been checked. But what was the process? A junior analyst pulling data from three different spreadsheets, a senior manager spot-checking the executive summary, and a final proofread for typos. The system is based on trust and manual effort. Now, imagine automating that.

The promise of Automated Fact-Checking (AFC) is immense. AI systems can scan documents, extract claims, and verify them against data sources in seconds. For organizations that publish high-stakes content like financial reports, scientific research, or regulatory filings, this seems like the solution to scaling accuracy.

But there is a paradox. The very AI that promises to eliminate human error introduces new, more subtle failure points. Treating AFC as a simple content moderation tool is a critical mistake. For high-stakes information, you do not need a content bot. You need an architecture built on the same principles that keep airplanes in the sky.

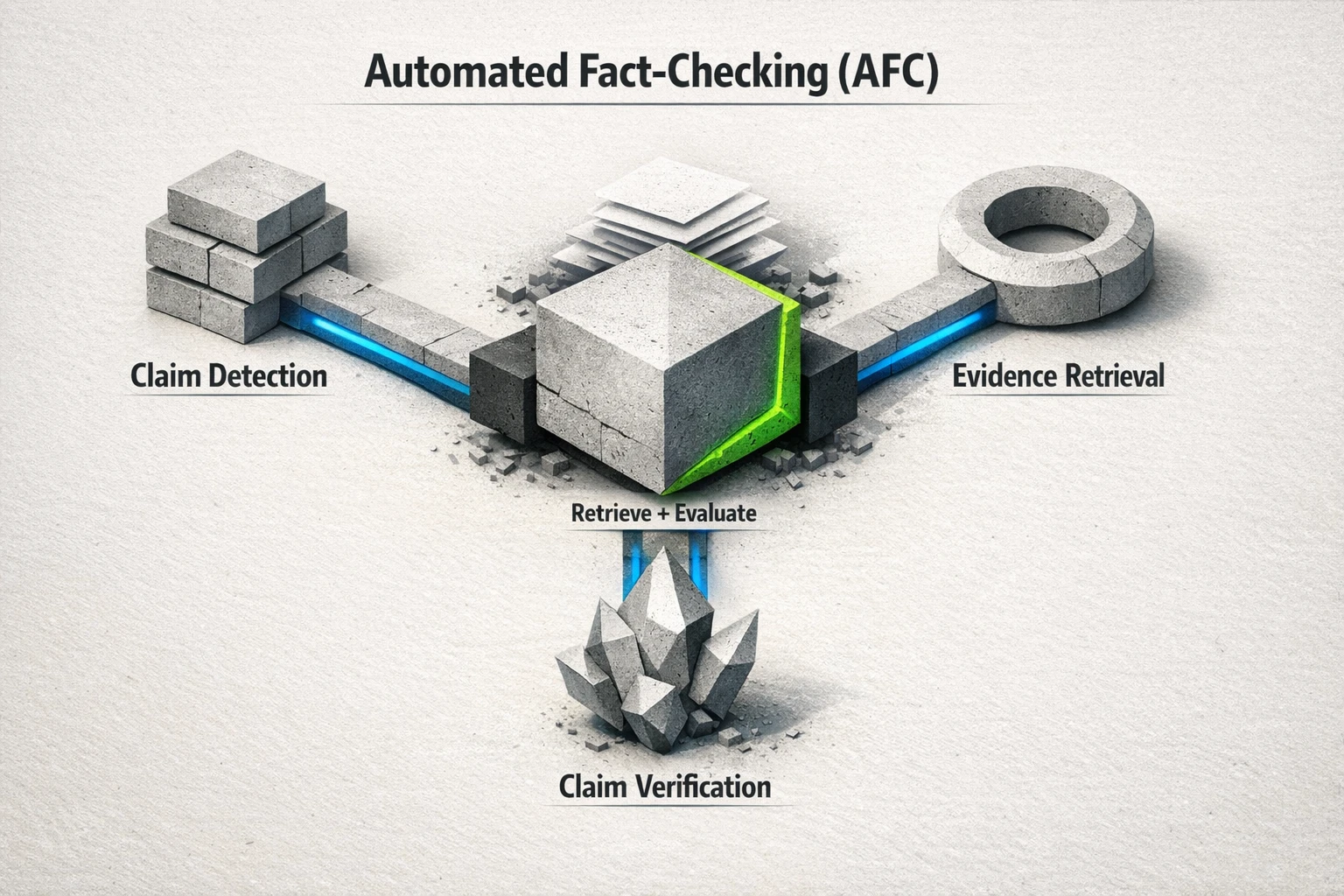

AFC systems typically decompose into three stages: claim detection, evidence retrieval, and claim verification. Together, these stages retrieve and evaluate evidence from diverse sources to assess a claim’s veracity.

The High-Stakes Flaw: Why Standard AFC Fails

Most AFC systems follow a simple, logical pipeline: find a claim, search for evidence, and render a verdict. This is fine for flagging questionable social media posts. It is completely inadequate for verifying a multibillion-dollar earnings report.

The problem is that standard AFC architectures have two fundamental blind spots that are fatal in high-stakes environments.

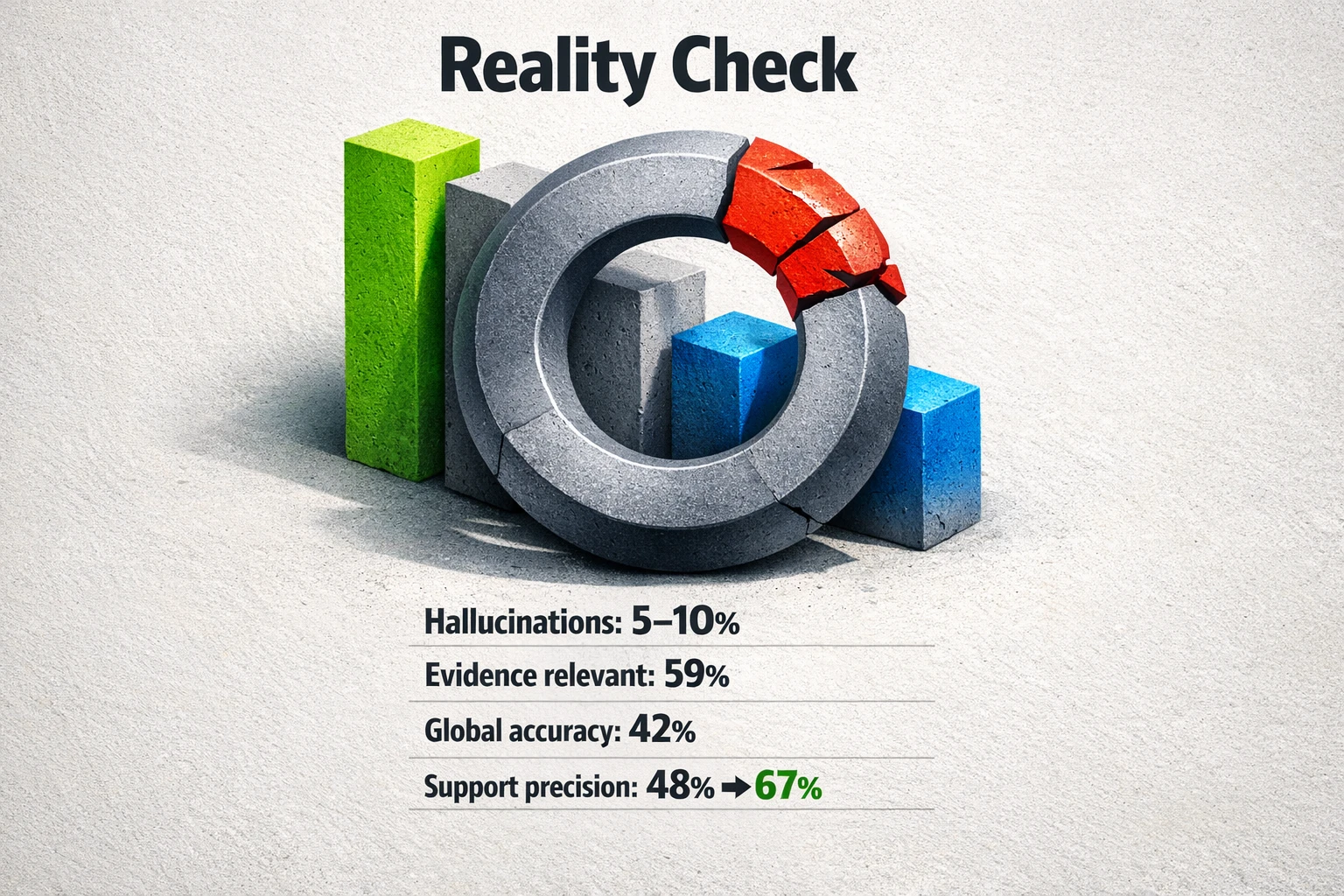

First, they are vulnerable to sophisticated falsehoods. Today’s large language models are optimized to produce fluent text, not to guarantee factual accuracy. This leads to what researchers call "hallucinations," where the AI generates text that is grammatically perfect but factually incorrect or entirely made up. Studies of even advanced models show they produce inaccurate factual statements in approximately 5 to 10 percent of responses to general knowledge queries [1]. When your system is built on this foundation, a single hallucinated data point can corrupt an entire analysis.
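A rough calculation shows why a single-digit error rate matters at document scale. The sketch below assumes, purely for illustration, that the 5 to 10 percent figure applies to each claim and that errors are independent. Neither assumption comes from the cited study, and the function name and the 20-claim document are hypothetical.

```python
def prob_at_least_one_error(per_claim_error_rate: float, num_claims: int) -> float:
    """Chance that at least one of `num_claims` independent claims is wrong."""
    return 1.0 - (1.0 - per_claim_error_rate) ** num_claims


# Hypothetical report containing 20 machine-generated factual claims.
for rate in (0.05, 0.10):
    risk = prob_at_least_one_error(rate, 20)
    print(f"error rate {rate:.0%}: {risk:.0%} chance of at least one bad claim")
# error rate 5%: 64% chance of at least one bad claim
# error rate 10%: 88% chance of at least one bad claim
```

Under these assumptions, an unchecked 20-claim document is more likely than not to contain at least one error.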

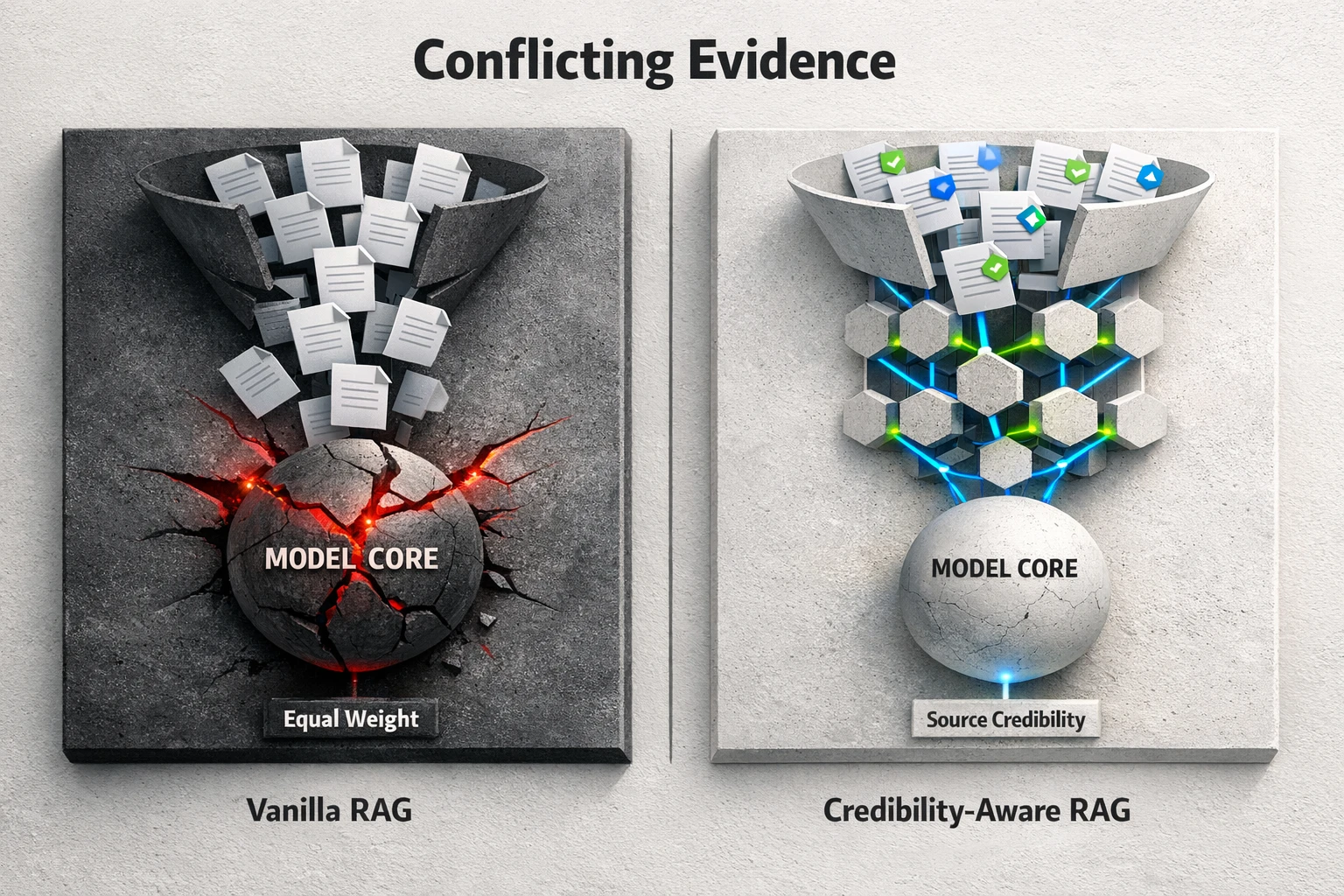

Second, these systems lack source intelligence. A retrieval-augmented generation (RAG) model pulls information from a knowledge base to answer a question. But it often treats a press release, an anonymous forum post, and a peer-reviewed study as equally valid sources of information. This is a critical failure. Research shows that these models struggle significantly with conflicting evidence when they do not explicitly consider the credibility of the source [2]. In a world of deepfakes and targeted misinformation, an architecture that cannot distinguish between authority and noise is a liability.

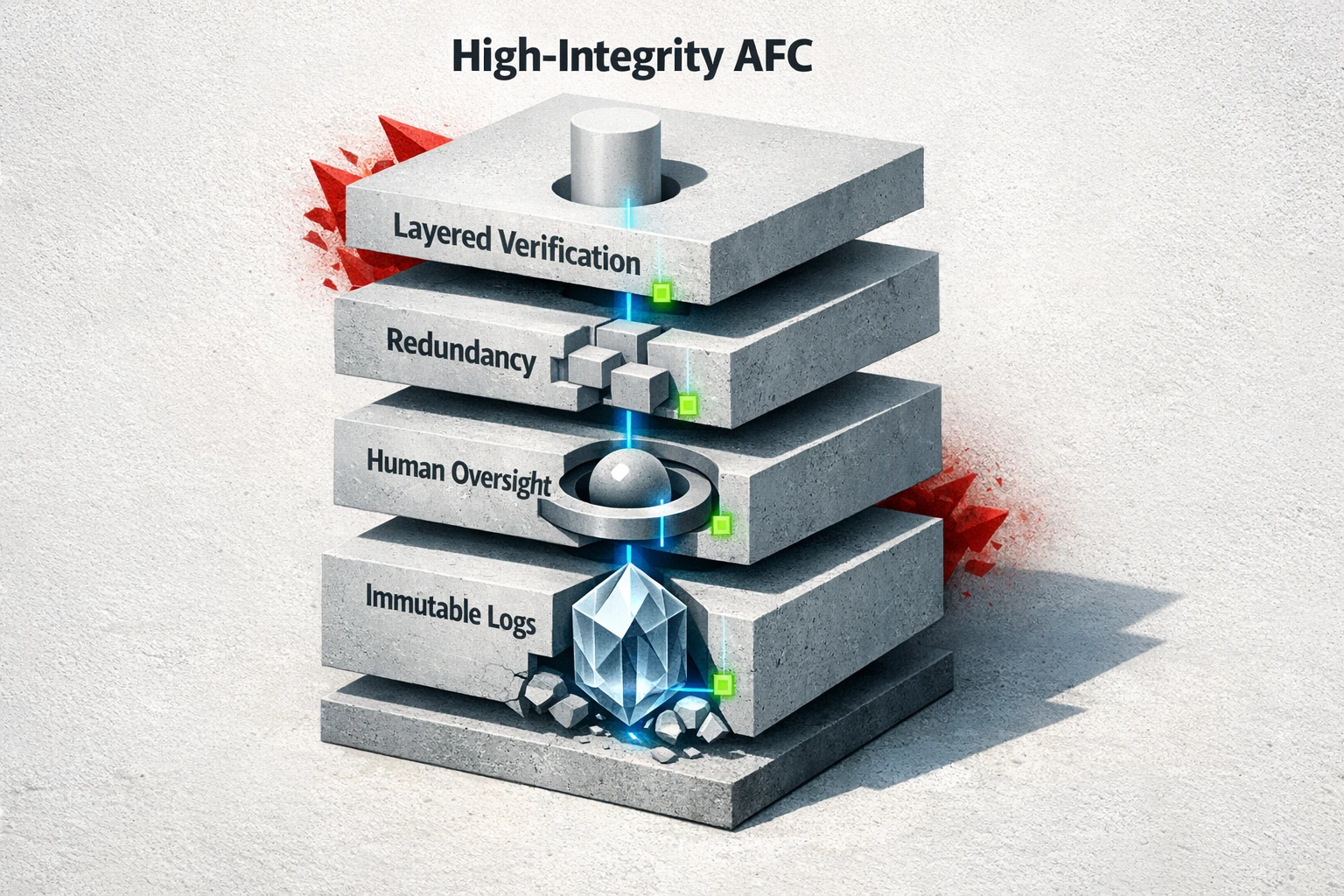

High-stakes AFC needs aviation-style safety thinking: multiple independent verification layers, redundancy, immutable audit trails, and explicit human oversight rather than full autonomy.

A Better Blueprint: The Resilient Hybrid Architecture

To build a fact-checking system you can trust with critical data, look to industries where failure is not an option, like aviation. The aviation industry’s approach to safety provides a powerful model for high-integrity AFC. It is not about trusting a single pilot or a single system. It is about building a resilient architecture with multiple layers of defense.

Here are the core principles of a resilient hybrid AFC architecture.

1. Layered Verification, Not a Single Check

An airplane does not rely on one sensor. It uses multiple, independent systems that cross-check each other. A high-stakes AFC architecture must do the same. Instead of one monolithic verification step, it should use a cascade of checks. This could include a statistical model to check for numerical anomalies, a knowledge graph to validate relationships between entities, and a natural language model to check textual claims. A red flag from any single layer forces a deeper review.
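The cascade idea can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Claim` and `LayerResult` types, both layer functions, and the 1 percent tolerance are hypothetical. The wording check is a toy stand-in for a real language model, and a knowledge graph layer is omitted for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Claim:
    text: str
    reported_value: Optional[float] = None   # figure stated in the document
    reference_value: Optional[float] = None  # figure from the system of record


@dataclass(frozen=True)
class LayerResult:
    layer: str
    flagged: bool
    reason: str = ""


def numeric_layer(claim: Claim, tolerance: float = 0.01) -> LayerResult:
    """Flag figures that differ from the reference by more than `tolerance` (relative)."""
    if claim.reported_value is None or claim.reference_value is None:
        return LayerResult("numeric", False, "nothing to compare")
    scale = max(abs(claim.reference_value), 1e-12)  # avoid dividing by zero
    deviation = abs(claim.reported_value - claim.reference_value) / scale
    if deviation > tolerance:
        return LayerResult("numeric", True, f"deviates from reference by {deviation:.1%}")
    return LayerResult("numeric", False)


def wording_layer(claim: Claim) -> LayerResult:
    """Toy stand-in for a language-model check: flag absolute wording."""
    absolutes = {"always", "never", "guaranteed"}
    hits = absolutes & {word.strip(".,;:!?").lower() for word in claim.text.split()}
    if hits:
        return LayerResult("wording", True, f"absolute wording: {sorted(hits)}")
    return LayerResult("wording", False)


Layer = Callable[[Claim], LayerResult]


def run_cascade(claim: Claim, layers: list[Layer]) -> tuple[bool, list[LayerResult]]:
    """Run every layer; any single flag escalates the claim to deeper review."""
    results = [layer(claim) for layer in layers]
    return any(r.flagged for r in results), results


claim = Claim("Revenue grew to 120.0 million.", reported_value=120.0, reference_value=112.0)
needs_review, results = run_cascade(claim, [numeric_layer, wording_layer])
print(needs_review)  # True: the numeric layer alone is enough to escalate
for result in results:
    print(result)
```

Every layer runs even after one has flagged the claim, so the reviewer sees the full picture rather than only the first objection.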

2. Source Intelligence, Not Just Retrieval

Your system must be designed to explicitly weigh the credibility of evidence. This goes beyond simple retrieval. It means building a source scoring module directly into the architecture. A report from a regulatory body is assigned a higher trust score than a self-published analysis. When evidence conflicts, the system can automatically favor the more credible source or, more importantly, flag the conflict for human review. This design directly counters the "credibility blind spot" of standard RAG models.

Retrieval systems can fail when conflicting sources are treated equally. Adding explicit source credibility and weighting can help, while naive “filter everything” approaches may backfire.
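One way to picture a source scoring module is shown below. It is a sketch under stated assumptions: the trust scores, the `Evidence` type, the `assess` function, and both thresholds are invented for illustration and would need calibration against real data. Rather than filtering out weak sources, it keeps all evidence and escalates when credible sources disagree or when only weak sources are available.

```python
from dataclasses import dataclass

# Hypothetical trust scores; real values would need careful calibration.
SOURCE_TRUST = {
    "regulatory_filing": 0.95,
    "peer_reviewed_study": 0.90,
    "press_release": 0.50,
    "self_published_analysis": 0.40,
    "anonymous_forum_post": 0.10,
}
DEFAULT_TRUST = 0.10  # unknown source types are treated as low credibility


@dataclass(frozen=True)
class Evidence:
    source_type: str
    supports_claim: bool


def assess(evidence: list[Evidence], conflict_margin: float = 0.3, min_trust: float = 0.5) -> str:
    """Return 'supported', 'refuted', or 'needs_human_review'."""
    support = [SOURCE_TRUST.get(e.source_type, DEFAULT_TRUST) for e in evidence if e.supports_claim]
    refute = [SOURCE_TRUST.get(e.source_type, DEFAULT_TRUST) for e in evidence if not e.supports_claim]
    best_support = max(support, default=0.0)
    best_refute = max(refute, default=0.0)

    # No evidence, or only low-credibility evidence: do not decide automatically.
    if max(best_support, best_refute) < min_trust:
        return "needs_human_review"
    # Both sides are backed by sources of similar credibility: flag the conflict.
    if support and refute and abs(best_support - best_refute) < conflict_margin:
        return "needs_human_review"
    # Otherwise favor the more credible side.
    return "supported" if best_support > best_refute else "refuted"


print(assess([Evidence("regulatory_filing", True), Evidence("anonymous_forum_post", False)]))
print(assess([Evidence("peer_reviewed_study", True), Evidence("regulatory_filing", False)]))
```

The first call favors the regulatory filing over the forum post. The second returns a review flag, because two credible sources disagree and the sketch deliberately declines to pick a winner.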

3. Immutable Audit Trails

When an aviation incident occurs, investigators retrieve the flight data recorder, or "black box." It provides a tamper-proof record of every action and system reading. A high-stakes AFC system requires the same level of auditability. Every claim that is processed, every piece of evidence retrieved, every verification step, and every human intervention must be logged in an immutable ledger. This creates an auditable trail that allows you to prove provenance and deconstruct any error to its source.
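A common building block for this kind of trail is a hash chain, where each log entry includes the hash of the entry before it. The sketch below uses only Python's standard `hashlib` and `json` modules. The `AuditLog` class and the event fields are hypothetical. Note that a hash chain makes tampering detectable, not impossible, so a production system would also need write-once storage or external anchoring of the latest hash.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def append(self, event: dict) -> str:
        """Add an event, chaining it to the previous entry; return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        stored_event = dict(event)  # shallow copy so later caller edits do not alias
        entry_hash = _digest(prev_hash, stored_event)
        self.entries.append({"event": stored_event, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"step": "claim_extracted", "claim": "Revenue grew 12%"})
log.append({"step": "evidence_retrieved", "source": "regulatory_filing"})
log.append({"step": "human_decision", "verdict": "refuted", "reviewer": "analyst-1"})
print(log.verify())  # True

log.entries[2]["event"]["verdict"] = "supported"  # simulate after-the-fact tampering
print(log.verify())  # False
```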

Human Oversight Is Not a Flaw, It's the Feature

The goal of this architecture is not to build a fully autonomous fact-checker. The goal is to build the world's most powerful assistant for a human expert. The final authority must remain with a person.

This human-in-the-loop design is not a temporary crutch while the AI gets better. It is a permanent and necessary component of any system that deals with high-stakes truth. The German news organization Der Spiegel Group demonstrated this with their experimental AI tool. It was not built to replace journalists. It was built to accelerate their work by extracting claims and assigning confidence scores, flagging the most dubious items for human fact-checkers to investigate [3]. The AI does the grunt work so the human can focus on the hard work of critical judgment.
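The triage pattern described here, scoring claims and surfacing the most dubious first, can be sketched as follows. This is not Der Spiegel's tool. The `ScoredClaim` type, the `triage` function, and the 0.7 threshold are hypothetical, and the confidence scores are assumed to come from some upstream model. Every claim stays in the human's queue; the score only sets the order and a priority label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredClaim:
    text: str
    confidence: float  # 0.0 = very dubious, 1.0 = very confident (assumed upstream score)


def triage(claims: list[ScoredClaim], priority_threshold: float = 0.7) -> list[tuple[ScoredClaim, str]]:
    """Order claims from most to least dubious and label each for the reviewer."""
    ordered = sorted(claims, key=lambda c: c.confidence)
    return [
        (claim, "priority" if claim.confidence < priority_threshold else "routine")
        for claim in ordered
    ]


queue = triage([
    ScoredClaim("Market share reached 31%.", 0.55),
    ScoredClaim("The company was founded in 1998.", 0.97),
    ScoredClaim("Revenue grew 12% year over year.", 0.80),
])
for claim, label in queue:
    print(f"{label:8} {claim.confidence:.2f}  {claim.text}")
```

Nothing is auto-approved in this sketch, which keeps the final decision on every claim with the human reviewer.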

The data on system performance confirms this necessity. In one evaluation of an agentic fact-checking system, its global accuracy in classifying claims was only 42% [4]. A system that misclassifies more claims than it gets right cannot be trusted to issue verdicts unsupervised. Full automation is not just unrealistic; it is irresponsible.

Empirical results show why high-stakes AFC must be hybrid: hallucinations persist, and end-to-end accuracy can lag even when evidence retrieval improves after human relevance filtering.

Your First Step: Design for Trust, Not Speed

Automating the verification of high-stakes content is possible, but it requires a fundamental shift in thinking. You are not building a content tool. You are engineering a system for trust.

The first step is not to evaluate AI vendors. It is to map your current verification process. Identify every manual checkpoint, every source of truth, and every point where human judgment is currently applied. That map becomes the blueprint for your resilient hybrid architecture, showing you where to augment your experts, not where to replace them.

Frequently Asked Questions

What is the main difference between standard AFC and a high-stakes system?

Standard AFC prioritizes speed and scale, often for moderating content like social media posts. A high-stakes system prioritizes verifiable accuracy, auditability, and reliability. It uses a more complex architecture with layered verification, source credibility analysis, and mandatory human oversight for critical decisions.

Can AI ever fully automate fact-checking for critical documents?

It is unlikely in the near future. AI models are probabilistic and prone to errors like hallucination. For high-stakes content where a single error can have severe consequences, the final judgment and accountability must rest with a human expert. The AI's role is to be a powerful assistant, not an autonomous decision-maker.

Where do most AFC projects go wrong?

They go wrong by underestimating the complexity of "truth." They often start with a simplistic model that works for basic claims but fails when faced with conflicting evidence, biased sources, or the need for deep contextual understanding. A successful project starts by designing for the hardest cases, not the easy ones.

What does a "human-in-the-loop" actually do in this system?

A human expert acts as the final arbiter. The AFC system flags claims that are uncertain, have conflicting evidence, or rely on low-credibility sources. The human expert then reviews the evidence curated by the AI, applies their domain knowledge to interpret context, and makes the final verification decision. They also provide feedback that helps improve the system over time.

Sources:

[1] IJCAI - Research on the frequency of factual inaccuracies and hallucinations in large language models.

[2] IJCAI - Analysis of retrieval-augmented generation model limitations when handling conflicting evidence without source credibility.

[3] Journalists.org - Case study detailing the experimental AI verification tool developed by Der Spiegel Group.

[4] Emergent Mind - Performance evaluation results from an agentic fact-checking system tested with professional journalists.