Ethical AI in Marketing: A Practical Framework for Building Unbreakable Consumer Trust

AI Summary

Ethical AI in Marketing presents a clear, actionable framework to help marketers close the growing trust gap with consumers by implementing responsible AI practices.

Bottom Line:

Adopting ethical AI strengthens customer loyalty and mitigates risks by embedding transparency, fairness, and accountability into AI-powered marketing.

What You'll Learn:

• How to assess and plan for ethical AI adoption with risk audits and policies

• Steps to govern AI responsibly through data stewardship and human oversight

• Techniques for ongoing monitoring to ensure compliance and bias mitigation

Best For:

Marketers and business leaders seeking to implement trustworthy AI strategies that enhance consumer relationships and regulatory compliance.

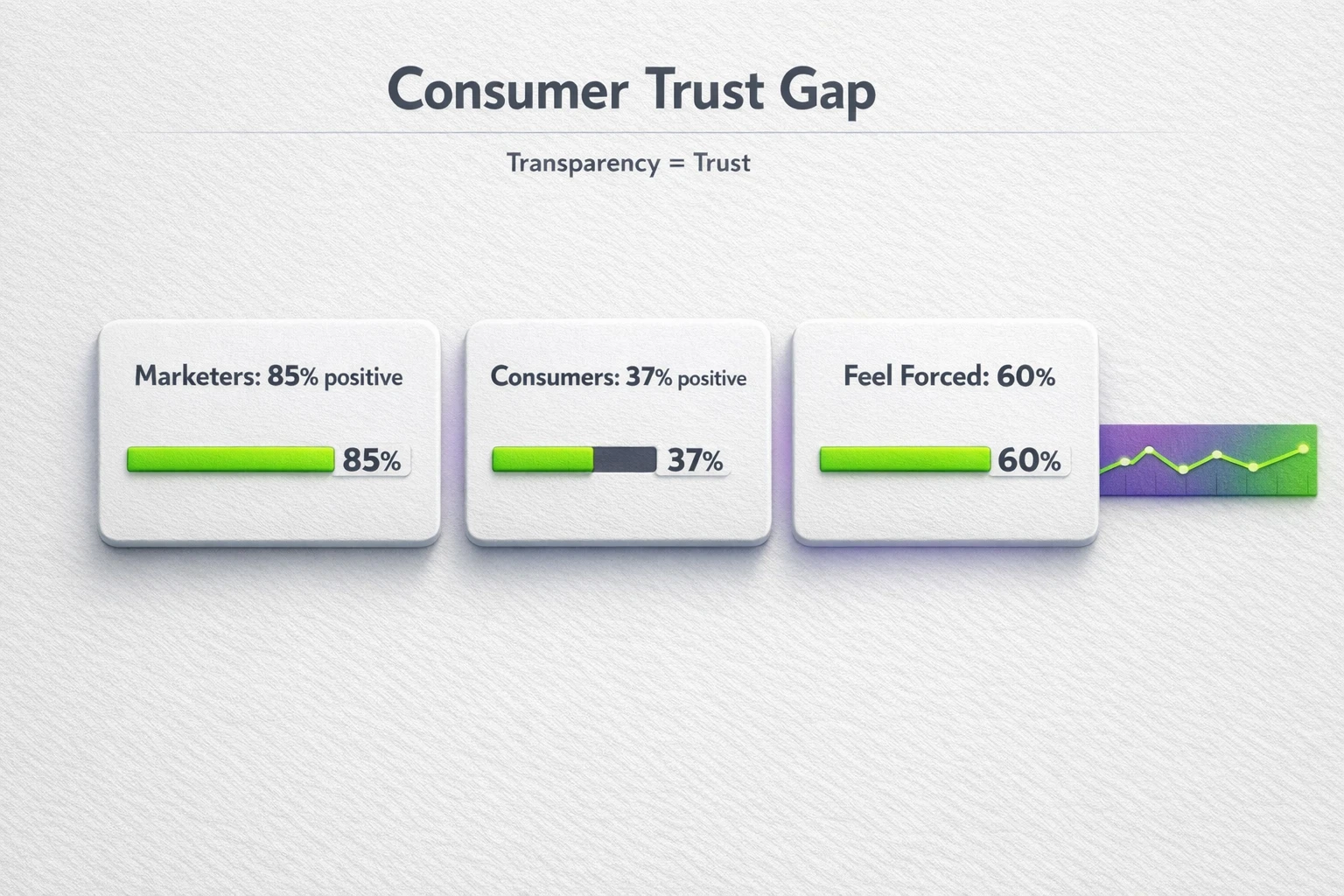

The rush to adopt AI in marketing is undeniable. A staggering 69% of marketers have already integrated AI into their operations. The problem is that this enthusiasm isn't always shared by the people who matter most: your customers. While 85% of marketers believe consumer sentiment toward AI is positive, only 37% of consumers actually feel positive about these interactions.

This isn't just a perception issue. It's a trust crisis in the making. When 60% of consumers feel forced to use a brand's AI, pushing it on them by default breeds alienation, not loyalty. In an era of declining trust in online information, simply deploying AI isn't enough. You must deploy it ethically. This isn't about regulatory box-checking. It's about building the single most valuable asset your business can have: lasting consumer trust.

The Widening Trust Gap: Why Your AI Strategy Might Be Backfiring

The disconnect between marketer perception and consumer reality is a critical vulnerability. Pushing AI-driven experiences on an unprepared or unwilling audience erodes the very foundation of the customer relationship. This is where a reactive approach to ethics fails. Waiting for a privacy complaint or a biased campaign to go viral is a strategy for disaster, especially when GDPR non-compliance can lead to fines of up to €20 million or 4% of a company's total worldwide annual turnover, whichever is higher.

Transparent, human-centric AI isn't a lofty ideal. It's a strategic imperative that directly impacts customer loyalty and your bottom line.

A concise metrics snapshot quantifying the consumer trust gap and making the case for transparent, human-centered AI practices.

Decoding the DNA of Trust: The Pillars of Ethical AI

To move beyond theory, you need a clear model. Established ethical frameworks, from UNESCO's Recommendation on the Ethics of Artificial Intelligence to IBM's pillars of trust, overlap heavily, and for marketers their common ground can be distilled into five core principles. These aren't abstract concepts. They are the building blocks of a resilient, customer-centric strategy.

1. Fairness and Inclusivity

This is about actively preventing your AI from perpetuating harmful stereotypes or excluding specific demographics. Algorithmic bias can creep into ad targeting, content personalization, and even lead scoring, creating inequitable experiences and alienating entire customer segments.

2. Transparency and Explainability

If you can't explain how your AI reached a decision, you can't earn trust. This means moving away from "black box" systems. Marketers need to understand and be able to communicate why a customer was shown a specific ad or content recommendation, ensuring clarity and accountability.

3. Data Privacy and Security

This goes beyond basic compliance. Ethical AI demands a proactive approach to data stewardship: practicing data minimization (collecting only what is necessary for a stated purpose) and giving consumers clear control over their personal information. It's about treating customer data as a loan, not an asset.
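To make data minimization concrete, here is a minimal Python sketch, assuming each marketing use case has a declared purpose and a list of fields it is allowed to touch. The purpose names, field names, and the `ALLOWED_FIELDS_BY_PURPOSE` mapping are hypothetical; in practice they would come from your data inventory and privacy policy.

```python
# Hypothetical purpose-to-fields allowlist; real mappings come from your
# data inventory and privacy policy.
ALLOWED_FIELDS_BY_PURPOSE = {
    "order_fulfillment": {"customer_id", "email", "shipping_address"},
    "product_recommendations": {"customer_id", "purchase_history"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Return only the fields the stated purpose is allowed to use.

    Unknown purposes receive no data at all (deny by default)."""
    allowed = ALLOWED_FIELDS_BY_PURPOSE.get(purpose, set())
    return {name: value for name, value in record.items() if name in allowed}


customer = {
    "customer_id": "c-123",
    "email": "ana@example.com",
    "shipping_address": "1 Main St",
    "purchase_history": ["sku-1", "sku-2"],
    "date_of_birth": "1990-01-01",
}
print(minimize(customer, "product_recommendations"))
# {'customer_id': 'c-123', 'purchase_history': ['sku-1', 'sku-2']}
```

Denying unknown purposes by default means a new AI use case gets no personal data until someone consciously decides what it needs.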

4. Accountability and Human Oversight

Technology is a tool, not a replacement for human judgment. True accountability means establishing clear lines of responsibility for AI-driven outcomes. A human must always be in the loop, ready to intervene, correct, and override automated decisions, especially in sensitive applications.

5. Reliability and Robustness

Your AI systems must perform consistently and safely. This involves rigorous testing to protect against manipulation, ensuring the system is secure from external threats, and having clear protocols for when the AI fails or produces unexpected results.

Navigating the Minefield: Common Ethical Pitfalls in AI Marketing

Applying these pillars requires vigilance. Many well-intentioned teams stumble when deploying AI, often falling into predictable traps that damage brand reputation.

The Challenge of Algorithmic Bias: An e-commerce platform's recommendation engine might inadvertently promote higher-margin products to specific demographics, creating an unfair shopping experience. Similarly, an AI-powered ad platform could learn to associate certain job roles with specific genders, limiting who sees those opportunities in ways that can violate anti-discrimination law. Mitigating this requires diverse training data, regular audits, and tools like IBM's AI Fairness 360 to detect and correct bias.
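A bias audit usually starts by comparing outcome rates across groups. The sketch below computes two common fairness measures, disparate impact (the ratio of selection rates) and statistical parity difference, in plain Python. It mirrors metrics that toolkits such as AI Fairness 360 report but does not use that library's API, and the audit data is invented for illustration. The 0.8 threshold reflects the widely cited four-fifths rule of thumb; treat it as a trigger for review, not a verdict.

```python
from collections import defaultdict


def selection_rates(groups, selected):
    """Share of each group that received the outcome (e.g. was shown an ad)."""
    totals, hits = defaultdict(int), defaultdict(int)
    for group, was_selected in zip(groups, selected):
        totals[group] += 1
        hits[group] += int(was_selected)
    return {g: hits[g] / totals[g] for g in totals}


def disparate_impact(groups, selected, unprivileged, privileged):
    """Ratio of selection rates; 1.0 means parity."""
    rates = selection_rates(groups, selected)
    return rates[unprivileged] / rates[privileged]


def statistical_parity_difference(groups, selected, unprivileged, privileged):
    """Difference in selection rates; 0.0 means parity."""
    rates = selection_rates(groups, selected)
    return rates[unprivileged] - rates[privileged]


# Hypothetical audit log: whether each user was shown a job ad, by gender.
groups = ["f", "f", "f", "f", "m", "m", "m", "m"]
shown = [1, 0, 0, 0, 1, 1, 1, 0]

di = disparate_impact(groups, shown, unprivileged="f", privileged="m")
spd = statistical_parity_difference(groups, shown, unprivileged="f", privileged="m")
print(f"disparate impact = {di:.2f}, parity difference = {spd:.2f}")
# disparate impact = 0.33, parity difference = -0.50
if di < 0.8:  # four-fifths rule of thumb
    print("Flag for review: selection-rate ratio below 0.8")
```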

The Illusion of Consent: Many businesses collect vast amounts of data under vague terms of service, creating a significant privacy risk. Hyper-personalization can quickly cross the line from helpful to intrusive if it's based on data the consumer didn't knowingly provide for that purpose. The solution is robust consent management, clear communication, and giving users easy-to-use opt-out mechanisms.
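Robust consent management means checking consent for the specific purpose at the moment data is used, not just at sign-up. Here is a minimal sketch, assuming a hypothetical `ConsentRecord` that stores per-purpose opt-ins; a production system would persist these records and keep their full history for audits.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    """Hypothetical record of which purposes a user opted into, and when."""
    user_id: str
    granted_purposes: set = field(default_factory=set)
    updated_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def grant(self, purpose: str) -> None:
        self.granted_purposes.add(purpose)
        self.updated_at = datetime.now(timezone.utc)

    def withdraw(self, purpose: str) -> None:
        # Opting out should be exactly as easy as opting in.
        self.granted_purposes.discard(purpose)
        self.updated_at = datetime.now(timezone.utc)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted_purposes


def choose_content(consent: ConsentRecord) -> str:
    # Personalize only with consent for this specific purpose;
    # otherwise fall back to non-personalized content.
    if consent.allows("personalized_recommendations"):
        return "personalized"
    return "generic"


consent = ConsentRecord(user_id="u-42")
consent.grant("personalized_recommendations")
print(choose_content(consent))  # personalized
consent.withdraw("personalized_recommendations")
print(choose_content(consent))  # generic
```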

The Rise of Deceptive Content: As AI-generated content becomes indistinguishable from human work, the potential for deception grows. Using AI to create "soulless" blog posts or deepfake testimonials is a short-term tactic that destroys long-term credibility. The ethical path involves mandatory labeling of AI-generated content and maintaining strong human oversight to ensure authenticity and quality.
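Labeling and human oversight can be enforced in the publishing workflow itself. The sketch below, with a hypothetical `ContentItem` type and placeholder disclosure wording, refuses to publish AI-generated content that no editor has approved and appends a disclosure to AI-generated content that has been approved.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder wording; use the disclosure text your legal team approves.
AI_DISCLOSURE = "This article was drafted with AI assistance and reviewed by our editors."


@dataclass
class ContentItem:
    body: str
    ai_generated: bool
    reviewed_by: Optional[str] = None  # editor who approved the piece, if any


def prepare_for_publication(item: ContentItem) -> str:
    """Block unreviewed AI content and label AI content that passed review."""
    if not item.ai_generated:
        return item.body
    if item.reviewed_by is None:
        raise PermissionError("AI-generated content requires human review before publishing")
    return f"{item.body}\n\n{AI_DISCLOSURE}"


draft = ContentItem(body="Five ways to cut onboarding time.", ai_generated=True)
try:
    prepare_for_publication(draft)
except PermissionError as err:
    print(err)  # blocked until an editor signs off

draft.reviewed_by = "editor@example.com"
print(prepare_for_publication(draft))
```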

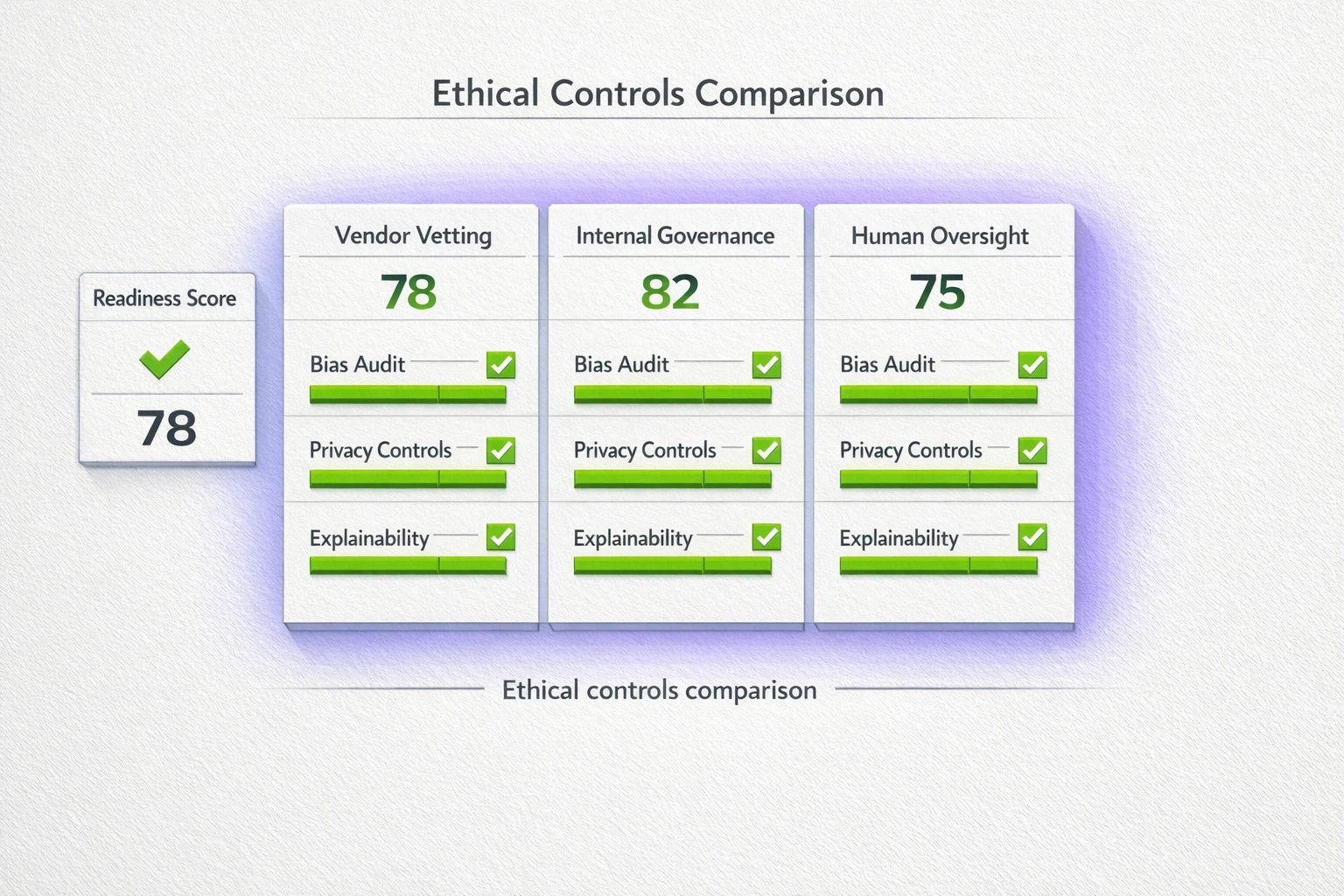

A side-by-side readiness matrix that helps evaluators compare vendor and internal ethical controls quickly, highlighting where to prioritize governance investments.

The PageBody AI Framework: Your Actionable Path to Responsible AI

Moving from principles to practice requires a structured approach. At PageBody AI, we guide our partners through a three-phase framework designed for practical implementation within marketing teams. This transforms ethical AI from a vague goal into a clear, manageable process.

Phase 1: Assess and Plan

You cannot fix what you do not measure. The first step is a comprehensive audit of your current and planned AI systems.

- Conduct AI Ethics Audits: Evaluate your models for bias, review data sources, and map potential risks to your customers and brand.

- Develop Clear AI Use Policies: Create internal guidelines that define acceptable and unacceptable uses of AI in marketing. This document should be the source of truth for your entire team.

- Implement Risk-Tiered Approvals: Not all AI is created equal. A low-impact internal tagging system requires less scrutiny than a high-impact AI model that determines ad spend and customer segmentation. Classify your systems and establish appropriate levels of review.
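Risk tiering can be expressed as a simple rule plus a table of required sign-offs. This is a toy sketch: the three questions, the tier boundaries, and the approver roles are all assumptions to be replaced by the criteria in your own AI use policy.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical sign-offs required per tier.
REQUIRED_APPROVALS = {
    RiskTier.LOW: {"team_lead"},
    RiskTier.MEDIUM: {"team_lead", "privacy"},
    RiskTier.HIGH: {"team_lead", "privacy", "legal"},
}


def classify(customer_facing: bool, uses_personal_data: bool,
             drives_spend_or_segmentation: bool) -> RiskTier:
    """Toy rule: systems that move budget or segment customers, or that reach
    customers using personal data, get the most scrutiny."""
    if drives_spend_or_segmentation or (customer_facing and uses_personal_data):
        return RiskTier.HIGH
    if customer_facing or uses_personal_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def is_approved(tier: RiskTier, sign_offs: set) -> bool:
    """True once every role required for the tier has signed off."""
    return REQUIRED_APPROVALS[tier] <= sign_offs


internal_tagger = classify(False, False, False)
segmentation_model = classify(False, True, True)
print(internal_tagger.value, segmentation_model.value)  # low high
print(is_approved(segmentation_model, {"team_lead", "privacy"}))  # False
```

Keeping the tier table as data rather than code makes it easy for legal and marketing to review and change the approval requirements without touching the classification rule.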

Phase 2: Implement and Govern

With a clear plan, you can build the infrastructure for responsible AI.

- Establish Robust Data Governance: Implement strict controls over data lineage, consent records, and PII management. When working with vendors, demand transparency and mandate bias testing as part of your service agreements.

- Ensure Human-in-the-Loop Oversight: Define clear intervention points where a human expert must review and approve AI-driven decisions. This is non-negotiable for customer-facing applications.

- Build for Explainability: Document your models using frameworks such as Model Cards. This practice creates an audit trail and forces your team to understand and articulate how an AI system works, making it transparent to both internal stakeholders and external regulators.
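A human-in-the-loop checkpoint can be as simple as a routing rule. In this sketch, which assumes a hypothetical `AIDecision` type and an illustrative confidence floor of 0.9, every customer-facing decision and every low-confidence decision goes to a review queue, and each human verdict is recorded with the reviewer's name to support the audit trail.

```python
from dataclasses import dataclass

CONFIDENCE_FLOOR = 0.9  # hypothetical threshold; tune per use case


@dataclass
class AIDecision:
    action: str
    customer_facing: bool
    confidence: float  # the model's own score in [0, 1]


def route(decision: AIDecision, review_queue: list) -> str:
    """Auto-apply only internal, high-confidence decisions; queue the rest."""
    if decision.customer_facing or decision.confidence < CONFIDENCE_FLOOR:
        review_queue.append(decision)
        return "queued_for_human_review"
    return "auto_applied"


def record_review(decision: AIDecision, approved: bool, reviewer: str) -> dict:
    """Log the human verdict (approve or override) for the audit trail."""
    return {"action": decision.action, "approved": approved, "reviewer": reviewer}


queue = []
print(route(AIDecision("retag blog archive", False, 0.97), queue))     # auto_applied
print(route(AIDecision("send win-back discount", True, 0.99), queue))  # queued_for_human_review
print(record_review(queue[0], approved=False, reviewer="j.doe"))
```

Customer-facing decisions are queued regardless of confidence, matching the rule that human review is non-negotiable for customer-facing applications.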

Phase 3: Monitor and Adapt

Ethical AI is not a one-time project. It is an ongoing commitment to improvement.

- Continuous Bias and Drift Monitoring: Model performance degrades over time as customer behavior and input data shift. Implement automated systems that continuously test for performance drift and re-emerging bias, with clear protocols for retraining or rolling back a model if it crosses an ethical boundary.

- Regular Compliance Checks: The regulatory landscape is constantly changing. Schedule periodic reviews to ensure your practices align with new laws like the EU AI Act and emerging US state privacy regulations.

- Upskill Your Team: Foster a culture of ethical awareness through ongoing training. Responsible AI is a cross-functional effort that requires collaboration between marketing, data science, and legal teams.
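One common way to automate drift monitoring is the Population Stability Index (PSI), which compares how a model score or input feature is distributed now against a baseline. The sketch below assumes equal-width bins over the baseline range and a small floor for empty bins; the 0.1 and 0.25 cut-offs often quoted for "moderate" and "significant" shift are industry rules of thumb rather than standards. The fairness ratios from your bias audit can be recomputed on the same schedule to catch re-emerging bias.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric
    feature or model score, using equal-width bins over the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid dividing by zero for a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so out-of-range values fall into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at a tiny share so the logarithm stays defined.
        return [max(c / len(values), 1e-4) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data: baseline scores spread over [0, 1), recent scores
# bunched in the upper half.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(baseline, recent)
print(f"PSI = {psi:.2f}")
if psi > 0.25:
    print("Significant shift: review, retrain, or roll back the model")
```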

An actionable ethical AI framework for marketers: compare maturity across the Assess, Govern, and Monitor phases to choose immediate next steps.

Implementing this framework is the most direct way to close the trust gap. By building ethics into the foundation of your AI strategy, you transform it from a potential liability into a powerful competitive advantage. PageBody AI's solutions, including our AI-driven SEO Strategist, are built on these principles, ensuring our clients can innovate with confidence.

Frequently Asked Questions

Isn't ethical AI just another compliance burden?

No. While compliance with regulations like GDPR is essential, true ethical AI is a strategic advantage. Brands that proactively build trust and demonstrate responsibility will attract and retain more loyal customers, differentiating themselves in a crowded market. It's about long-term brand equity, not just short-term risk avoidance.

How can a small business implement such a complex framework?

You don't have to boil the ocean. Start by focusing on your highest-risk AI applications, like ad targeting or customer personalization. Develop a simple AI use policy and prioritize transparency in all customer communications. Partnering with a provider like PageBody AI allows you to leverage enterprise-grade ethical governance without needing a large internal data science team.

What is the real ROI of investing in ethical AI?

The ROI comes from both risk mitigation and growth opportunities. On the mitigation side, you reduce the likelihood of costly regulatory fines and reputational damage from a biased or intrusive campaign. On the growth side, you build deeper customer trust, which leads to higher loyalty, increased customer lifetime value, and a stronger brand that people are proud to associate with.

How does PageBody AI ensure its own systems are ethical?

Our systems are designed with a "human-in-the-loop" philosophy. For example, our SEO Strategist provides intelligence and analysis, but the final strategic decisions are always guided by human expertise. We prioritize data minimization, build our models on diverse and vetted data sources, and maintain transparent processes that allow our clients to understand how our AI arrives at its recommendations.

Sources:

- IAPP - Data on AI adoption rates in marketing.

- Harvard Professional Development - Foundational concepts on the core challenges of AI ethics.

- UNESCO - Global standard-setting principles for ethical AI.

- Digital Marketing Institute - Insights on the marketer's role in implementing ethical AI.

- IBM AI Ethics - Core pillars and industry perspectives on AI governance.

- Silverback Strategies - Best practices for implementing ethical AI as a business strategy.

- Brookings Institution - Frameworks for detecting and mitigating algorithmic bias.

- PwC - Analysis of evolving US state privacy laws and regulations.