AI Content Quality is a Myth. A Verification Protocol is the Reality.

AI Summary

AI content quality assurance is not about polishing drafts but about installing a rigorous verification protocol that turns risky AI output into reliable, trust-building assets. This piece breaks down a practical, four-stage workflow any team can adopt and scale.

- The 4-stage protocol covering foundational fact-checking, brand and editorial alignment, risk and responsibility auditing, and final human-in-the-loop approval

- How the protocol operationalizes E-E-A-T and AI governance frameworks like NIST to reduce hallucinations, bias, and compliance risks while scaling content

- Ways to use confidence scores and feedback loops so each review cycle trains your prompts and processes, pushing accuracy from ~80–85% to over 95%

For teams relying on AI content but worried it’s quietly eroding trust, not growing authority.

Everyone is racing to scale content with AI. The problem is that many teams are shipping the AI's first draft. Raw output often carries factual errors, brand inconsistencies, and subtle biases. The web is now flooded with generic content, and your audience is growing skeptical.

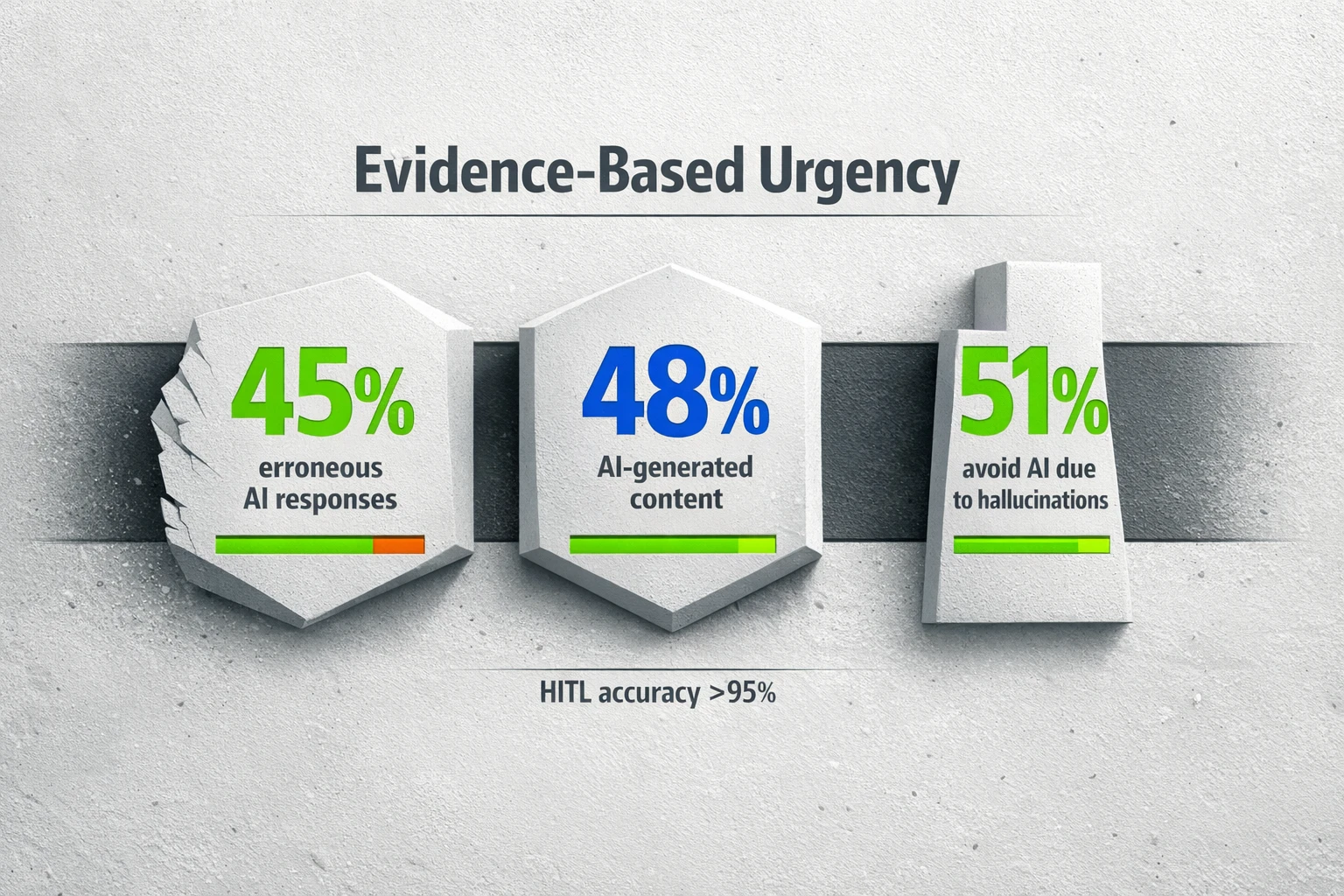

The scale is staggering. By 2025, AI-generated articles swelled to make up nearly 48% of web content [1]. Yet the quality remains a serious risk. A joint study by the BBC and the European Broadcasting Union found that 45% of AI assistant responses to news questions contained at least one significant issue, such as inaccurate information or faulty sourcing [2]. This isn't just a technical problem. It is a trust problem. A recent UK survey showed that 51% of students are put off using generative AI by the fear of getting false results [3].

If you want to use AI to build authority, you cannot afford to publish content that undermines your credibility. A simple checklist is not enough. You need a formal, repeatable verification protocol.

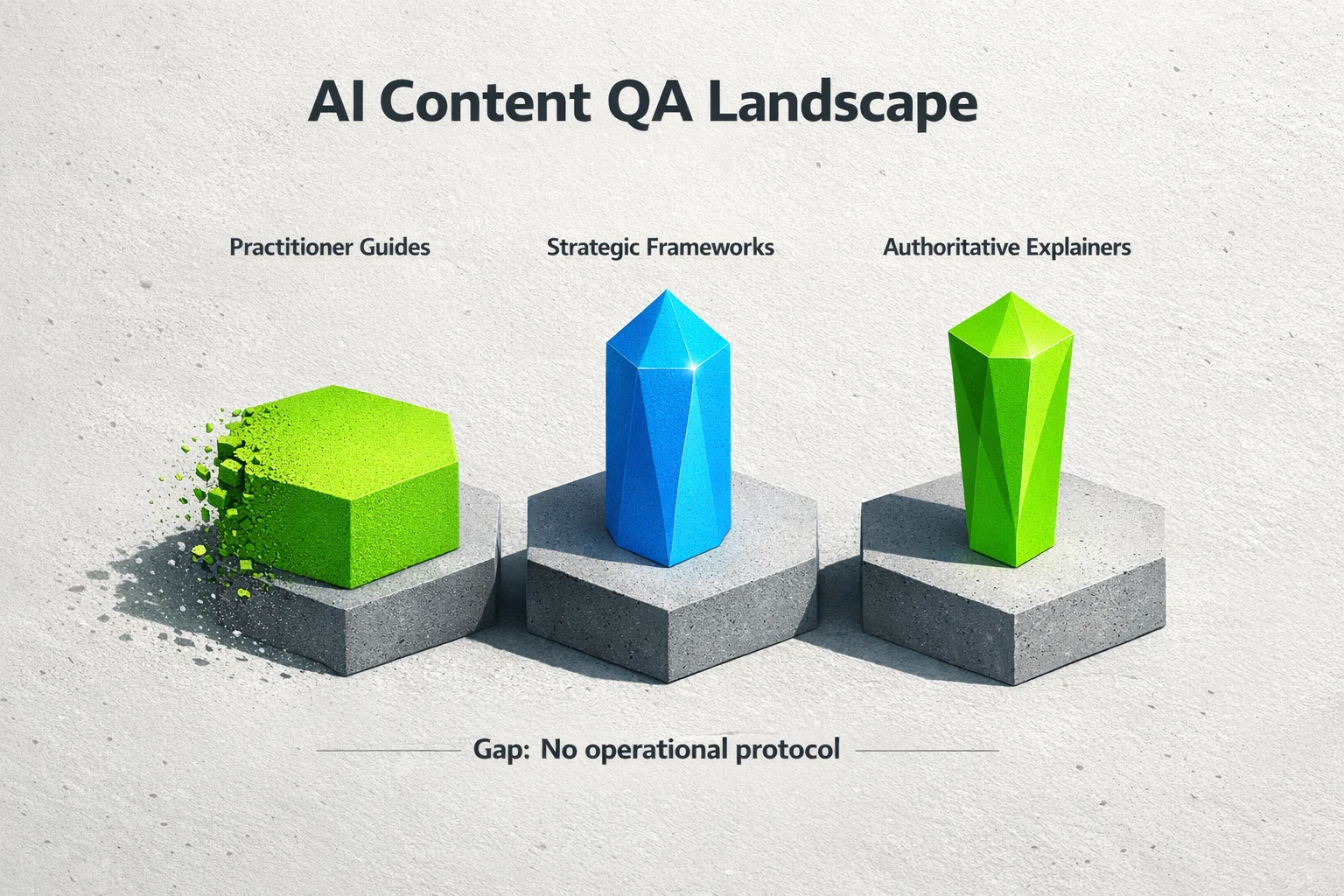

What is an AI Content Verification Protocol?

An AI content verification protocol is a standardized, multi-stage workflow for ensuring AI-assisted content is accurate, original, on-brand, and safe to publish. It moves your team from an informal "looks good to me" review to a structured, defensible process.

Think of it as the bridge between raw AI output and content that actually builds authority. Simple editing checklists focus on grammar and style. A protocol addresses deeper issues like factual integrity, originality, and risk mitigation. It’s the operational layer that turns a powerful tool into a reliable business asset.

The 4-Stage AI Content Verification Protocol

A robust protocol gives your team a clear, step-by-step process. It creates consistency and ensures no critical checks are missed. Instead of hoping for quality, you engineer it.
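One way to picture "engineering" quality is to treat the four stages described below as ordered gates that a draft must clear before it can be published. The following is a minimal sketch in Python under that assumption. The stage identifiers mirror this article's stage names, while `ReviewRecord`, `sign_off`, and `ready_to_publish` are hypothetical names invented for illustration, not part of any existing tool.

```python
from dataclasses import dataclass, field

# Stage identifiers follow the article's four stages, in order.
STAGES = (
    "foundational_check",
    "brand_editorial_alignment",
    "risk_responsibility_audit",
    "final_approval",
)


@dataclass
class ReviewRecord:
    """Hypothetical audit record for one piece of content."""
    title: str
    signoffs: dict = field(default_factory=dict)  # stage -> reviewer name

    def sign_off(self, stage: str, reviewer: str) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        # Enforce the order: every earlier stage must already be signed off.
        for earlier in STAGES[:STAGES.index(stage)]:
            if earlier not in self.signoffs:
                raise RuntimeError(f"{earlier} must be completed before {stage}")
        self.signoffs[stage] = reviewer

    def ready_to_publish(self) -> bool:
        # Publishable only when all four stages carry a named reviewer.
        return all(stage in self.signoffs for stage in STAGES)
```

Because each sign-off stores a reviewer's name, the same record doubles as a simple audit trail showing who approved what.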

Stage 1: Foundational Check (Accuracy & Originality)

This is the non-negotiable first pass. Before you spend a minute on style, you must confirm the content is factually sound and original.

- Systematic Fact-Checking: Verify every statistic, date, and claim. Best practices include prompting the AI for its sources and then cross-referencing those claims with primary sources like research institutions or government websites [4]. Never trust a number without verification.

- Plagiarism Detection: Run the text through a reliable plagiarism checker. While AI doesn’t typically copy and paste, it can generate text that is substantially similar to its training data. This check protects you from unintentional infringement.

- Source Verification: If the AI provides links or sources, check them. Do they exist? Do they actually support the claim being made? Broken or irrelevant links are a major red flag.
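Part of the fact-checking pass can be given a head start with a small script. The sketch below does not verify anything by itself. It only surfaces sentences that contain a number but carry no citation marker, so an editor knows where to look first. The heuristics are assumptions made for illustration: a "claim" is any sentence containing a digit, and a citation is a bracketed number such as `[4]`. The function name `unverified_claims` is hypothetical.

```python
import re

# Assumed heuristics: sentences end with ., ! or ? followed by whitespace,
# and citations look like [1], [2], and so on.
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
HAS_NUMBER = re.compile(r"\d")
CITATION = re.compile(r"\[\d+\]")


def unverified_claims(draft: str) -> list[str]:
    """Return sentences that contain a number but no citation marker."""
    flagged = []
    for sentence in SENTENCE_SPLIT.split(draft.strip()):
        # Ignore digits that belong to the citation markers themselves.
        without_citations = CITATION.sub("", sentence)
        if HAS_NUMBER.search(without_citations) and not CITATION.search(sentence):
            flagged.append(sentence)
    return flagged


draft = (
    "Revenue grew 12% last year. "
    "AI made up 48% of content [1]. "
    "Editing matters."
)
for sentence in unverified_claims(draft):
    print("CHECK:", sentence)
```

A cited sentence still needs its source opened and read. The script only narrows down which claims have no source attached at all.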

Stage 2: Brand & Editorial Alignment

Here, you transform generic AI text into content that sounds like you. This is where you inject your company’s unique perspective and voice.

- Voice and Tone: Edit the content to align with your brand's style guide. Correct any phrases or terminology that feel off-brand.

- Human Touch: Remove common AI giveaways like robotic rhythms, excessive clichés, and bland, generic statements [5]. Rewrite for clarity and flow, ensuring the content is engaging for a human reader.

- Add Unique Experience: This is critical for building credibility. Your team's firsthand experience and unique insights are what separate valuable content from commodity articles. Demonstrating genuine expertise in this way builds trust through your content and is a key aspect of E-E-A-T in the AI era.

Stage 3: Risk & Responsibility Audit

This stage is about protecting your brand and your audience. You are looking for subtle issues that automated tools often miss.

- Bias Screening: Review the content for subtle and overt biases related to gender, race, age, or other characteristics. AI models can inadvertently perpetuate stereotypes found in their training data.

- Ethical Review: Check for any harmful advice, dangerous stereotypes, or ethically questionable content. This is especially important for Your Money or Your Life (YMYL) topics like finance and healthcare. If you operate in this space, understanding E-E-A-T for AI content in regulated industries is not optional.

- Compliance Check: Ensure the content adheres to industry regulations and legal standards, including disclosure requirements or specific terminology.

Stage 4: Final Approval & Human-in-the-Loop Feedback

The final gate before publishing requires a definitive human sign-off. This is the moment of ultimate accountability.

- Senior Review: A subject matter expert or senior editor should perform the final review and grant approval. This person is accountable for the content's quality and accuracy.

- Feedback Loop: This is an advanced but powerful step. Document the corrections and edits made during the QA process. Use this feedback to refine your prompts and instructions for the AI on future tasks. A strong human-in-the-loop AI quality assurance workflow is essential for continuously improving output.
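The feedback loop can start as nothing more than a structured log of corrections. Here is a minimal sketch, assuming each correction is tagged with the stage where it was caught and a short category label. The `Correction` fields, the category labels, and the `top_issues` helper are hypothetical names chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Correction:
    stage: int      # 1-4, matching the protocol stages
    category: str   # hypothetical label, e.g. "wrong_statistic"
    note: str       # what the editor changed and why


def top_issues(log: list[Correction], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent correction categories with their counts."""
    return Counter(c.category for c in log).most_common(n)


log = [
    Correction(1, "wrong_statistic", "Percentage did not match the cited report"),
    Correction(2, "off_brand_tone", "Replaced hype phrasing with plain language"),
    Correction(1, "wrong_statistic", "Date was off by one year"),
]
print(top_issues(log, n=1))
```

If one category keeps topping the list, that is a signal to change the prompt or instructions that produce it rather than fixing the same error by hand each time.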

From Protocol to Governance: Scaling Your Quality Framework

A documented protocol does more than just improve your content. It becomes the foundation of your entire AI governance strategy.

When you have a formal process, you can build a scalable and defensible system for creating high-quality content. This protocol is the practical application of high-level frameworks like the NIST AI Risk Management Framework, which provides guidance on how to govern, map, measure, and manage AI systems responsibly [6].

Advanced teams can take this even further by using AI confidence scores to automate parts of the review. For example, any output with a confidence score below 90% can be automatically flagged for mandatory human review [7]. Human-in-the-loop workflows of this kind are reported to raise AI accuracy from a baseline of 80-85% to over 95% [7].
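The confidence-score rule can be sketched in a few lines. This example assumes your tooling exposes a confidence value between 0 and 1 for each output, which not every AI system does. The `confidence` key, the `route` function, and the dictionary shape are hypothetical, and the 0.90 threshold is simply the 90% example from the text.

```python
REVIEW_THRESHOLD = 0.90  # the 90% example above; tune to your own risk tolerance


def route(outputs: list[dict], threshold: float = REVIEW_THRESHOLD):
    """Split outputs into (flagged for mandatory review, standard queue)."""
    flagged, standard = [], []
    for item in outputs:
        # A missing score is treated as 0.0, so unscored items are always flagged.
        score = item.get("confidence", 0.0)
        (flagged if score < threshold else standard).append(item)
    return flagged, standard


outputs = [
    {"id": 1, "confidence": 0.95},
    {"id": 2, "confidence": 0.72},
    {"id": 3},  # no score reported
]
flagged, standard = route(outputs)
print([item["id"] for item in flagged])   # items needing mandatory review
print([item["id"] for item in standard])  # items in the standard queue
```

Treating a missing score as zero is a deliberately conservative choice: anything the system cannot score goes to a human. Items in the standard queue still pass through the Stage 4 sign-off.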

A Protocol Isn't an Obstacle. It's Your Competitive Edge.

Many teams see quality assurance as a bottleneck that slows down AI's speed. This is the wrong way to think about it. Speed without quality is just a faster way to damage your brand's reputation.

A verification protocol is how you harness AI's power responsibly. It allows you to scale content production without sacrificing the trust you've worked so hard to build. Google’s own guidance rewards "high-quality content, however it is produced," emphasizing original, helpful, people-first content that demonstrates expertise and trustworthiness [8]. An effective protocol is the engine that produces exactly that.

This systematic approach is what separates a true SEO intelligence strategist from tools that just generate text. It’s not about writing more articles. It’s about building a library of authoritative assets that win trust and rankings.

Frequently Asked Questions

How long does a proper AI QA process take?

The time varies with content complexity and length. A short, non-technical article might take 15-30 minutes for a trained editor. A long-form piece on a sensitive topic could take over an hour. The key is to bake this time into your workflow, rather than treating it as an afterthought. It's faster than writing from scratch but requires a real time investment.

Can't I just use a plagiarism and grammar checker?

No. Those tools are part of Stages 1 and 2, but they are insufficient on their own. They cannot verify factual accuracy, detect subtle bias, check for brand voice alignment, or add unique human experience. They are useful assistants, not a complete protocol.

How does this protocol help with Google's E-E-A-T?

This protocol is designed specifically to address E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). Stage 1 (Accuracy) and Stage 3 (Risk Audit) directly build Trustworthiness. Stage 2, which requires adding unique insights and firsthand knowledge, directly injects Experience and Expertise. Following the full protocol results in content that is authoritative by design.

Is this process overkill for a small business?

No, it's about scaling the process to your needs. A solo founder can run through the four stages on their own. A larger team can assign different stages to different people. The protocol's principles remain the same. Skipping these checks is riskier for a small business, as a single piece of inaccurate or low-quality content can do more damage to a young reputation.

---

Sources:

- [1] Axios - Report on the growth of AI-generated articles as a percentage of web content.

- [2] Josh Bersin - Analysis of a BBC and EBU study on error rates in AI responses to news queries.

- [3] Higher Education Policy Institute - A 2025 UK survey detailing student sentiment and fears regarding generative AI.

- [4] Articulate - Best practices and methods for systematically fact-checking AI-generated content.

- [5] Stratton Craig - Guide for editors on identifying and correcting common AI writing flaws to add a human touch.

- [6] AI21 - An overview of AI governance frameworks, including the NIST AI Risk Management Framework.

- [7] Parseur - Data on how Human-in-the-Loop workflows improve AI accuracy, including the use of confidence scores.

- [8] Google Search Central - Google's official documentation on its stance toward AI-generated content, prioritizing quality and E-E-A-T.