Ethical AI & Responsible Innovation: Foundations for Trust in Future Business

AI Summary

Ethical AI and responsible innovation are essential to building trust and competitive advantage in modern business. This article offers a clear framework to transform AI ethics from a compliance burden into a strategic asset.

Bottom Line:

Embracing responsible AI enhances transparency, reduces risks, and drives measurable business growth with higher profit margins and customer retention.

What You'll Learn:

- How to implement AI ethics frameworks that align with global standards.

- The importance of explainable AI for accountable and transparent decision-making.

- Strategies to close governance gaps and ensure compliance with AI regulations.

Best For:

Business leaders and managers seeking actionable solutions to integrate ethical AI practices and build sustainable trust with customers and stakeholders.

The rapid adoption of AI has created a critical tension. On one side, businesses are eager to unlock new efficiencies and capabilities. On the other, customers, employees, and regulators are demanding greater transparency and accountability. Many leaders see ethical AI as a complex compliance hurdle. The reality is much simpler. Responsible innovation is not a cost center. It is one of the most powerful strategic assets for building lasting trust and a significant competitive advantage.

This guide moves beyond the abstract principles of AI ethics. We will provide a practical framework for implementation, focusing on the tangible business outcomes that responsible AI delivers. You will learn how to navigate the core challenges, adopt actionable tools, and transform ethical considerations from a defensive necessity into a core driver of your company's growth.

The Tangible ROI of Responsible AI

Investing in ethical AI is a direct investment in your bottom line. While competitors focus on avoiding risk, forward-thinking companies are capturing measurable value. The data suggests that trust translates directly into business performance.

Consider the financial impact. Companies that prioritize ethical AI see up to 10% higher profit margins and 20% higher customer retention. These are not soft metrics; they represent real revenue and sustainable growth. A key driver is trust: 62% of consumers report having more trust in companies that use AI ethically, and 85% are more likely to engage with those brands. That trust also matters for visibility in AI-driven search, where engines are beginning to treat consistent, credible brand narratives as a signal of authority.

This shift moves ethical governance from a reactive compliance measure to a proactive value creation engine.

[Chart: current governance vs. a trust-driven AI approach, comparing trust, retention, and profit metrics]

The Core Challenges: Navigating AI Ethics in Business

To build a responsible AI practice, you must first understand the primary challenges organizations face. These are not just technical problems. They are business problems that require a combination of technology, process, and cultural commitment to solve.

Bias and Fairness

AI models learn from data, and if that data reflects historical biases, the model can reproduce and even amplify them. This can lead to unfair outcomes in critical areas like hiring, lending, and customer service, exposing your business to significant legal and reputational risk. Mitigation requires a multi-layered approach: auditing datasets for hidden biases, re-evaluating algorithmic models for fairness, and building diverse development teams who can spot cultural blind spots.
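A dataset or model audit often starts with a simple question: do different groups receive favorable outcomes at similar rates? The sketch below compares selection rates per group and computes a disparate impact ratio (lowest rate divided by highest). The 0.8 cutoff mirrors the "four-fifths rule" heuristic from U.S. employment-selection guidance; the data and group labels are hypothetical, and a real audit would use several fairness metrics, not just this one.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    records: iterable of (group, outcome) pairs, where outcome is 1 for a
    favorable decision (e.g. "hired", "approved") and 0 otherwise.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest.

    1.0 means equal selection rates; values well below 1.0 flag a gap
    worth investigating.
    """
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody selected in any group: no disparity to measure
    return min(rates.values()) / highest

if __name__ == "__main__":
    # Hypothetical toy data: (group label, 1 = approved / 0 = denied)
    decisions = [("A", 1), ("A", 1), ("A", 0), ("A", 1),
                 ("B", 1), ("B", 0), ("B", 0), ("B", 0)]
    print(selection_rates(decisions))   # {'A': 0.75, 'B': 0.25}
    ratio = disparate_impact_ratio(decisions)
    print(round(ratio, 3))              # 0.333
    if ratio < 0.8:  # four-fifths heuristic, used here as an assumption
        print("Flag for review: selection rates differ substantially")
```

A low ratio does not prove discrimination on its own, but it tells the team where to look first: at the training data, the features, or the decision threshold.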

Transparency and Explainability (XAI)

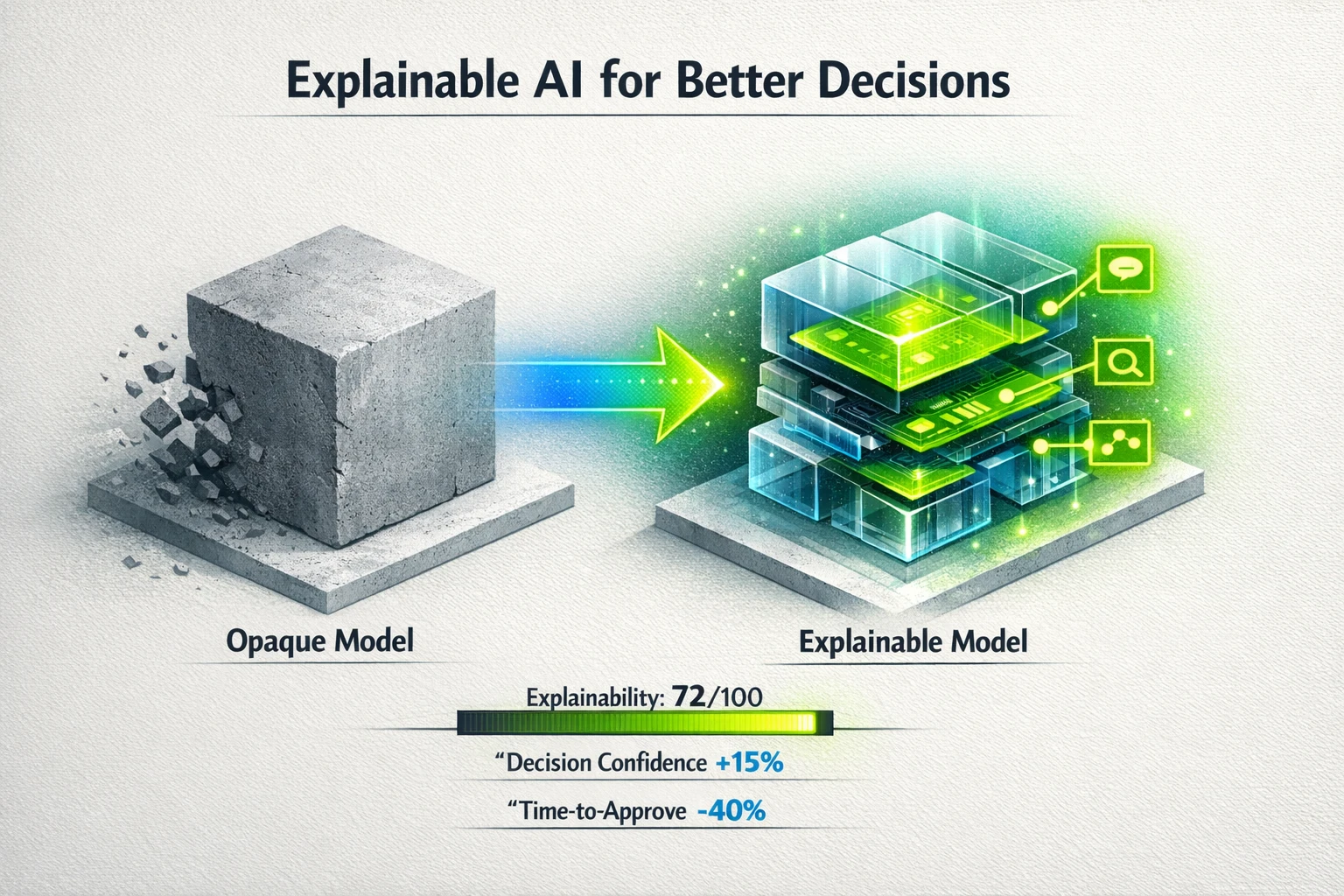

Many advanced AI models operate as "black boxes," making it difficult or impossible to trace how they reached a particular conclusion. This opacity erodes trust and makes it hard to debug errors or prove compliance. Explainable AI (XAI) addresses this problem. It covers techniques and models that can articulate their reasoning in a human-understandable way. Implementing XAI is essential for accountability, but it comes with technical hurdles, such as algorithmic complexity and high computational costs, that must be managed.

Privacy and Data Protection

AI systems are data-hungry, which creates inherent privacy risks. The collection, storage, and processing of vast amounts of personal information must comply with increasingly strict regulations like GDPR and CCPA. A failure to protect data can result in severe financial penalties and a permanent loss of customer trust. Privacy-preserving AI techniques and robust data governance are no longer optional.
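One basic privacy-preserving step is to keep direct identifiers out of training data. The sketch below uses keyed pseudonymization (HMAC-SHA256 from Python's standard library): the same email always maps to the same token, so records can still be joined, but anyone without the secret key cannot recompute tokens to re-identify people. The field names and the `prepare_training_row` helper are hypothetical. Note that under GDPR, pseudonymized data is still personal data, so this reduces risk rather than removing obligations.

```python
import hashlib
import hmac

def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed token (64 hex characters).

    Normalizing first means " Ada@Example.com" and "ada@example.com"
    map to the same token. Keep the key separate from the data.
    """
    normalized = identifier.strip().lower()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical set of fields that should never reach a training set as-is.
DIRECT_IDENTIFIERS = {"name", "phone", "email"}

def prepare_training_row(row: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and add a pseudonymous join key."""
    cleaned = {k: v for k, v in row.items() if k not in DIRECT_IDENTIFIERS}  # data minimization
    cleaned["customer_id"] = pseudonymize(row["email"], secret_key)
    return cleaned

if __name__ == "__main__":
    key = b"example-key"  # hypothetical; load real keys from a secrets manager
    row = {"name": "Ada", "email": "Ada@Example.com", "phone": "555-0100", "tenure_months": 18}
    print(prepare_training_row(row, key))
```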

Accountability and Governance

When an AI system fails, who is responsible? The lack of clear liability is a major governance challenge, and many organizations are dangerously behind. A staggering 54% of corporate boards do not list AI governance among their top five priorities. This leadership gap creates significant vulnerabilities, and it shows up in day-to-day controls: companies not directly affected by the EU AI Act are 22 to 33 points behind their peers on every major AI control, creating a landscape of unmanaged risk.

Building Blocks of Trust: From Theory to Actionable Frameworks

Discussing principles is easy. The real work lies in operationalizing them. Moving from theory to practice requires concrete frameworks, practical tools, and a clear understanding of implementation.

Implementing an AI Ethics Framework

A robust framework provides the guardrails for responsible innovation. Start by establishing a set of core principles aligned with global standards, such as those developed by UNESCO. The next step is to integrate these principles into the entire AI lifecycle, from initial concept to deployment and monitoring. This includes conducting mandatory ethical impact assessments before launching any new AI project to identify potential risks. Your goal is to make ethical review a standard part of your development process, not an afterthought.
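Making ethical review "a standard part of your development process" usually means turning the impact assessment into a gate that a project must pass before deployment. Here is a minimal sketch under stated assumptions: the question list, `ImpactAssessment`, and `approve_for_launch` are all hypothetical names, and a real assessment would record evidence and reviewers, not just yes/no answers.

```python
from dataclasses import dataclass, field

# Hypothetical assessment items; derive your own from your core principles.
REQUIRED_QUESTIONS = (
    "affected_groups_identified",
    "bias_audit_completed",
    "explanation_method_defined",
    "personal_data_minimized",
    "human_override_available",
    "accountable_owner_named",
)

@dataclass
class ImpactAssessment:
    project: str
    answers: dict = field(default_factory=dict)  # question -> True / False

    def open_items(self):
        """Required questions that are unanswered or answered 'no'."""
        return [q for q in REQUIRED_QUESTIONS if not self.answers.get(q, False)]

def approve_for_launch(assessment: ImpactAssessment) -> bool:
    """Block deployment until every required item is satisfied."""
    missing = assessment.open_items()
    if missing:
        print(f"{assessment.project}: launch blocked, open items: {', '.join(missing)}")
        return False
    print(f"{assessment.project}: assessment complete, cleared for launch")
    return True

if __name__ == "__main__":
    draft = ImpactAssessment("support-chatbot", {"bias_audit_completed": True})
    approve_for_launch(draft)  # blocked: five items still open
```

Wiring a check like this into the release pipeline is what moves ethics review from an afterthought to a required step.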

The Power of Explainable AI (XAI) in Decisions

For business leaders, XAI is about confidence. It transforms an opaque recommendation from a black-box model into a transparent, auditable decision. Imagine a loan application AI that doesn't just say "denied," but also provides the top three factors that led to its conclusion. This allows for human oversight, appeals, and continuous model improvement. While there are technical challenges, the business case is clear: XAI reduces risk, builds stakeholder trust, and enables your team to make better, more defensible decisions. The same transparency applies beyond lending; the right tools can show how specific inputs, such as expert content, contribute to how AI systems perceive brand authority and other key business drivers.
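The loan example can be sketched with a simple linear scoring model, where each feature's contribution is its weight times how far the applicant differs from an average (baseline) applicant. For a linear score this splits the difference from the baseline exactly; for complex models, libraries such as SHAP or LIME estimate similar per-feature attributions. Every weight, feature name, and baseline value below is made up for illustration.

```python
import math

# Hypothetical model parameters; not taken from any real lender.
WEIGHTS = {
    "credit_score": 0.02,
    "debt_to_income": -6.0,
    "years_employed": 0.15,
    "missed_payments": -0.9,
}
INTERCEPT = -12.0
BASELINE = {"credit_score": 680, "debt_to_income": 0.30,
            "years_employed": 5, "missed_payments": 1}

def approval_probability(applicant):
    """Logistic score: sigmoid of intercept plus weighted features."""
    z = INTERCEPT + sum(w * applicant[f] for f, w in WEIGHTS.items())
    return 1 / (1 + math.exp(-z))

def explain(applicant, top_n=3):
    """Rank features by how far they moved the score away from the baseline.

    Negative values pushed toward denial, positive values toward approval.
    """
    contributions = {f: w * (applicant[f] - BASELINE[f]) for f, w in WEIGHTS.items()}
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_n]

if __name__ == "__main__":
    applicant = {"credit_score": 640, "debt_to_income": 0.55,
                 "years_employed": 2, "missed_payments": 3}
    p = approval_probability(applicant)
    print(f"Decision: {'approved' if p >= 0.5 else 'denied'} (p={p:.2f})")
    for feature, effect in explain(applicant):
        print(f"  {feature}: {effect:+.2f}")
```

For this hypothetical applicant the explanation names missed payments, debt-to-income ratio, and credit score as the main reasons for denial, which gives a reviewer something concrete to check or an applicant something concrete to appeal.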

[Chart: how explainable AI turns opaque model outputs into actionable, higher-confidence decisions, with explainability scores and time savings]

Closing the Gap in AI Auditing and Compliance

The governance gap in most organizations is alarming. Critical audit controls are often missing entirely. Research shows that 60% of companies lack AI anomaly detection, 78% are unable to validate their AI's training data, and an astonishing 33% have no audit trails whatsoever. This leaves them blind to model drift, hidden biases, and potential security breaches.

Implementing a dedicated AI auditing process is essential for closing these gaps. This involves using specialized tools to monitor model performance, validate data integrity, and maintain comprehensive logs. By establishing these controls, you create a system of record that can be used to demonstrate compliance with emerging regulations and build a culture of accountability.
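A "system of record" for AI decisions needs logs that cannot be quietly edited. One common technique is a hash-chained, append-only log: each entry stores the hash of the previous entry, so changing a recorded field or removing an entry from the middle breaks the chain. The `AuditTrail` class below is a hypothetical minimal sketch; in practice you would also store the log in write-once storage or anchor the latest hash externally, because someone with full write access could otherwise recompute every hash.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditTrail:
    """Append-only log where each entry includes the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, output, reviewer=None):
        """Append one AI decision; inputs/output must be JSON-serializable."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "reviewer": reviewer,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry["hash"]

    @staticmethod
    def _digest(entry):
        """SHA-256 over a canonical JSON form of every field except 'hash'."""
        payload = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(canonical).hexdigest()

    def verify(self):
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

if __name__ == "__main__":
    trail = AuditTrail()
    trail.record("credit-model-v3", {"applicant_id": "a1"}, "denied", reviewer="j.doe")
    trail.record("credit-model-v3", {"applicant_id": "a2"}, "approved")
    print(trail.verify())
```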

[Chart: AI audit and compliance gaps, with percentages and readiness indicators to help prioritize governance investments]

Future-Proofing Your Business with Responsible AI

The landscape of AI ethics and regulation is constantly evolving. A reactive approach will leave you perpetually behind. A proactive strategy focused on responsible innovation will ensure your business remains resilient and competitive.

Key trends to prepare for include:

- Generative AI Ethics: Misinformation, copyright infringement, and deepfakes present new challenges. Proactive policies and detection tools are needed to manage these risks.

- Global Regulatory Convergence: While 82% of U.S. organizations feel no direct regulatory pressure yet, global standards like the EU AI Act will have cascading effects. Building adaptable, globally compliant systems now will prevent costly retrofitting later.

- The Human-AI Partnership: The future of work is not about replacement. It is about augmentation. Emphasize human oversight, continuous training, and leveraging AI to enhance human judgment. Smart systems support this approach; for example, an AI internal linking agent can handle complex information architecture while freeing human strategists for higher-value work.

At PageBody AI, we are committed to these principles. We build solutions that are not only powerful but also transparent, efficient, and accountable. Our mission is to empower businesses to harness AI responsibly, turning advanced technology into a reliable engine for growth.

Frequently Asked Questions

Isn't implementing ethical AI too expensive for a small or medium-sized business?

This is a common misconception. The cost of inaction, including potential fines, reputational damage, and loss of customer trust, is far greater than the investment in a responsible AI framework. The key is to start with a scalable approach. Focus first on high-risk areas and leverage the tangible ROI, such as improved customer retention and higher profit margins, to fund further initiatives.

Where do we even start with building an AI ethics framework?

The best first step is to form a small, cross-functional team including members from leadership, legal, and technology. Your initial goal is simple: conduct an inventory of all current and planned AI systems in your organization. This will allow you to identify the highest-risk applications and prioritize your efforts on creating guidelines for those specific use cases first.
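Once the inventory exists, even a rough scoring scheme helps the team agree on what to tackle first. The sketch below is a hypothetical example: the `AISystem` fields, the point weights, and the sample systems are assumptions meant to be replaced with your own risk criteria (for instance, categories drawn from the EU AI Act's risk tiers).

```python
from dataclasses import dataclass

@dataclass
class AISystem:
    name: str
    affects_individuals: bool   # decisions about hiring, credit, access, etc.
    uses_personal_data: bool
    fully_automated: bool       # no human review before the decision takes effect
    in_production: bool

    def risk_score(self) -> int:
        # Hypothetical weights: adjust them to your own risk appetite.
        score = 0
        score += 3 if self.affects_individuals else 0
        score += 2 if self.uses_personal_data else 0
        score += 2 if self.fully_automated else 0
        score += 1 if self.in_production else 0
        return score

def prioritize(inventory):
    """Highest-risk systems first; these get guidelines and controls first."""
    return sorted(inventory, key=lambda s: s.risk_score(), reverse=True)

if __name__ == "__main__":
    inventory = [
        AISystem("Marketing copy generator", False, False, False, True),
        AISystem("Resume screening model", True, True, True, True),
        AISystem("Churn prediction (planned)", False, True, False, False),
    ]
    for system in prioritize(inventory):
        print(f"{system.risk_score()}  {system.name}")
```

A simple additive score is deliberately crude; its value is in forcing the team to answer the same questions for every system.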

How can we ensure our AI models remain unbiased over time?

Bias mitigation is not a one-time fix. It requires continuous monitoring. AI models can "drift" as new data is introduced, causing biases to emerge over time. Implementing automated monitoring tools that track model performance and fairness metrics is crucial. These tools can alert you to deviations, allowing you to retrain or adjust the model before it causes significant harm.
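A common way to detect drift is the Population Stability Index (PSI), which compares how model inputs or scores are distributed now versus at training time. The sketch assumes both distributions are already binned into the same buckets as proportions. A widely cited rule of thumb treats PSI above roughly 0.2 to 0.25 as a significant shift; the 0.2 default here is an assumption to tune, and the same check can be run separately for each demographic group to catch fairness drift.

```python
import math

def population_stability_index(baseline, current, eps=1e-6):
    """PSI = sum over bins of (current - baseline) * ln(current / baseline).

    baseline, current: lists of bin proportions (each summing to ~1),
    using the same bin edges.
    """
    if len(baseline) != len(current):
        raise ValueError("both distributions need the same bins")
    psi = 0.0
    for b, c in zip(baseline, current):
        b, c = max(b, eps), max(c, eps)  # avoid log(0) for empty bins
        psi += (c - b) * math.log(c / b)
    return psi

def check_drift(baseline, current, threshold=0.2):
    """Return (psi, drifted) so monitoring can both log and alert."""
    psi = population_stability_index(baseline, current)
    return psi, psi > threshold

if __name__ == "__main__":
    # Hypothetical score distributions over four bins.
    at_training = [0.25, 0.25, 0.25, 0.25]
    this_month = [0.10, 0.20, 0.30, 0.40]
    psi, drifted = check_drift(at_training, this_month)
    print(f"PSI={psi:.3f}, retrain or review: {drifted}")
```

Running a check like this on a schedule, and alerting when it trips, is what turns "continuous monitoring" into an operational habit rather than an occasional audit.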

What's the real risk of ignoring AI ethics if we're not in a regulated industry yet?

Regulation is only one piece of the puzzle. The biggest risks are often commercial and reputational. Customers are increasingly savvy about data privacy and algorithmic fairness. A single high-profile incident of AI bias can destroy years of brand equity. Furthermore, ethical AI is becoming a competitive differentiator. Businesses that build trust will attract more customers and top talent, leaving others behind regardless of formal regulations.

Sources:

- IBM Institute for Business Value - Provided data on the business case and ROI of ethical AI.

- Kiteworks 2024 Data Security Forecast - Source for statistics on AI governance gaps and audit control deficiencies.

- UNESCO Recommendation on the Ethics of AI - Outlines the first global standard-setting framework for AI ethics.

- MIT Sloan Management Review - Offered insights on building an organizational culture around AI ethics.

- Harvard Professional Development - Provided a leadership perspective on AI ethics as a competitive advantage.

- CFA Institute Report on Explainable AI - Detailed the technical challenges and importance of XAI implementation.

- KPMG Trust in Artificial Intelligence Report - Provided context on consumer trust levels in AI systems.